Author Archives: Craig Anderton

Add Lookahead to the Fat Channel Compressors

The Fat Channel is a versatile channel strip plug-in that, because of all the other cool Studio One individual processors, is easy to overlook. But it has several outstanding features, including the ability to choose from a variety of compressors—sort of like plug-ins within a plug-in (fig. 1). Three are stock; the rest are optional at extra cost from the PreSonus shop.

However, none of these compressors has a lookahead feature. Lookahead delays the audio we hear, but the compressor monitors the audio in real time. Thus, the compressor knows in advance when it needs to apply compression. Without lookahead, if you’re using heavy compression (like for guitar sustain), you’ll hear a nasty pop because the compression can’t kick in until the audio exceeds the threshold—and by that time, it’s too late. Some of the audio has already passed through uncompressed, which causes the pop. The first audio example exhibits this pop. Also note that the first and second audio examples are both normalized—but this one sounds really soft, because the pops are so loud you can’t raise the level any higher without bumping against the headroom.

The solution is simple. What’s more, it applies to not only the Fat Channel, but any dynamics processor, from any manufacturer, that doesn’t have lookahead.

Create a bus, and insert the Analog Delay. Edit the parameters for 2 ms of delay, delayed sound only, and no modulation (fig. 2). Insert the Fat Channel after the Analog Delay, and choose your favorite compressor.

At the audio track you want to process, create two pre-fader sends. One goes to the bus, and the other to the Fat Channel’s sidechain. Turn down the track’s fader so you hear only the audio coming from the bus. This accomplishes our goal: The undelayed sidechain signal triggers the Fat Channel’s compression 2 ms before the delayed audio enters the compressor. So, the compressor is primed and ready to go when a transient hits (fig. 3).

Now compare the next audio example to the first one—the nasty pop is gone. Yeah! Also notice how it’s much louder, because the headroom doesn’t have to accommodate a pop.
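To see why the 2 ms head start matters, here is a minimal Python sketch of the routing. It is not how the Fat Channel works internally: the compressor is a generic one-pole envelope follower, and the 48 kHz sample rate, threshold, ratio, and 0.5 ms attack are all assumed values chosen for illustration. The only idea taken from the tip is that the sidechain hears the audio before the delayed audio reaches the compressor.

```python
import math

SAMPLE_RATE = 48_000  # assumed sample rate


def ms_to_samples(ms, sample_rate=SAMPLE_RATE):
    """Convert a time in milliseconds to a whole number of samples."""
    return round(ms * sample_rate / 1000)


def compress(audio, sidechain, threshold=0.25, ratio=10.0, attack_ms=0.5):
    """Generic compressor sketch: gain is computed from `sidechain`, applied to `audio`."""
    coeff = math.exp(-1.0 / (attack_ms * SAMPLE_RATE / 1000))
    env = 0.0
    out = []
    for x, s in zip(audio, sidechain):
        # One-pole envelope follower on the sidechain signal.
        env += (1.0 - coeff) * (abs(s) - env)
        if env > threshold:
            gain = (threshold + (env - threshold) / ratio) / env
        else:
            gain = 1.0
        out.append(x * gain)
    return out


def delay(signal, n):
    """Delay a signal by n samples, keeping the original length."""
    return [0.0] * n + signal[:len(signal) - n]


# 10 ms of silence followed by a sudden full-level burst (a worst-case transient).
burst = [0.0] * 480 + [1.0] * 4800

# Without lookahead: the compressor reacts to the same audio it is processing.
no_lookahead = compress(burst, burst)

# Faux lookahead: the audio path is delayed 2 ms, the sidechain is not.
lookahead = compress(delay(burst, ms_to_samples(2)), burst)

peak_plain = max(abs(v) for v in no_lookahead)
peak_ahead = max(abs(v) for v in lookahead)
```

With these assumed settings, the first sample of the burst passes through at full level when there is no lookahead, while the delayed version arrives after the gain has already been pulled down.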

However, there’s a catch. Studio One plug-ins with a lookahead function delay the signal by 1.5 ms, but apply plug-in delay compensation so that all the other tracks are delayed by 1.5 ms. This keeps the tracks in sync, and you don’t really notice a delay this short. However, our “faux lookahead” doesn’t have plug-in delay compensation.

To compensate, move the track forward on the timeline by 2 ms to align it with the other tracks. You can do this via the Delay setting in the Event Inspector (F4).

So…What’s the Deal with Aux Channels?

Hardware is making a comeback. Real-time, improvisation-based drum machines and synths are gaining popularity, and you can find occasional bargains for used synths that were top of the line only a few years ago. So, it makes sense that Studio One would want to simplify integrating external hardware synths (similarly to how Pipeline integrates external hardware effects).

When Version 5 introduced the Aux Channel, comments ranged from “So great—I’ve been wanting this for years!” to “why not just feed the instrument into audio tracks?” Well, they’re both right—Aux Channels are about workflow with external hardware synthesizers. But Aux Channels can streamline workflow and simplify setup, compared to assigning the instrument outs into audio tracks, which then route to the mixer.

Aux Channels monitor external audio interface inputs directly, not the outputs from recorded tracks, in the mixer. These external inputs can be any audio. For example, when mixing, you might want to listen to a well-mixed CD for comparison. You don’t need to record this as a track, just monitor the inputs it’s feeding as needed.

Aux Channel Benefits

My favorite feature is that you can add a hardware synthesizer to the External Instruments folder (located in the Browser’s Instruments tab), and drag and drop the hardware synth into the arrange view—just like a virtual instrument. This automatically creates the Aux Channel, and sets up the Instrument track as you saved it. Set up the external synth once, then use it any time you want.

Also, the external instrument needs only the Note data track in the Arrange view—audio tracks are unnecessary because you’re just going to mix them in the console anyway. This is consistent with Studio One’s design philosophy of dedicating the Arrange view to arranging, not mixing. Of course, you can show/hide audio tracks in the Arrange view, but it’s more convenient to have those audio tracks show up directly in the console, like your other audio sources.

How to Create an Aux Channel

First, your hardware sound generator needs to be set up as an external Instrument in the Options (Windows) or Preferences (Mac) window. Then, in the Console, click on External toward the lower left. Click the downward arrow for the desired External Device, and choose Edit. (Note that if it’s a workstation that combines a sound generator with a keyboard, you should have two entries—one for the Keyboard, and one for the Instrument. Choose the instrument.)

When the control mapping window appears, click on the Outputs button (the one with the right arrow; see fig. 1), and choose Add Aux Channel.

After the Aux Channel appears, assign its input to the audio input(s) being fed by the hardware synth. For example, if the synth’s audio is going to stereo input 3+4, then choose that stereo input. (If your existing Song Setup I/O doesn’t include an easily identifiable name for the inputs being used for the Aux track, consider doing some renaming.)

Next, save these default settings. If needed, click on Outputs again to bring up the controller mapping window, and click on Save Default. Saving it is what allows the hardware instrument to show up in the Browser.

All Ready!

In the Browser’s Instruments tab, look under External Instruments (toward the top, just under Multi Instruments). Drag your instrument into the Arrange view, and start playing. If you don’t hear anything, the likely causes are either that the keyboard being assigned to the synth isn’t the default keyboard (specify All Inputs for MIDI in, and it should work), or the Aux Channel input hasn’t been assigned to the correct audio interface inputs.

Also note that with workstations whose keyboard drives the sound generator, turn off the Local Control parameter (usually in the instrument’s MIDI setup menu). Otherwise, you’ll be playing the sound generator from the keyboard, and Studio One will also be feeding it notes. The result is note double-triggering.

Bouncing

To preserve what the Instrument track plays as an audio track, choose a track’s Transform to Audio Track option, or select the Event and bounce to a new track (fig. 2).

When bouncing, make sure that the Record Input is assigned to the Aux Channel where the Instrument’s audio appears. Finally, Studio One knows that because the bounce involves external hardware, the bounce must be done in real-time (faster than real-time bouncing is possible only with virtual instruments that live inside the computer). Happy hardware, everyone!

Amp Sims: Garbage In, Garbage Out

An astute Friday Tip reader commented that while the tip on how to level the outputs of amp sim presets was indeed useful, I should also write about the importance of input levels. Well, I do take requests—and yes, input levels are crucial with amp sims.

Physical amps are forgiving. They soak up transients, and chop off low and high frequencies. But amp sims tend to magnify the differences between guitars and playing styles. When going through the same preset, a player who uses a thin flat pick, 0.008 strings, and single coil pickups will sound totally different compared to a player who uses a thumbpick, 0.010 strings, and humbuckers. So, let’s look at four common mistakes people make when feeding amp sims.

- Dialing up presets created by someone else. You have no idea what kind of input level the amp sim expects, so you’ll almost certainly need to edit at least some parameters (particularly the input drive or level).

- Too much gain. Excessive gain generates nasty distortion, not the “good” distortion an amp creates. You’ll also have issues with decreased definition, potential aliasing, and a sound that splatters all over a mix. Check out the audio example, using Ampire’s Painapple amp.

The first half has the input set to 5 o’clock. Not only is the sound so distorted the playing is indistinct, listen to the very beginning, before the first note hits. All that gain is picking up noise, hum, and garbage that becomes part of your guitar signal. No wonder the amp sim sounds like garbage—it has plenty of garbage mixed in. The audio example’s second half has the input at 9 o’clock. The sound is not only more focused, but stronger.

- Inconsistent levels. Amp sim plug-ins are always re-amping—the guitar track is dry. Because amp sims are so dependent on levels, consistent sounds from presets require consistent track levels. I normalize my dry guitar tracks to -3 dB, and then my presets know what to expect. Also, note that Event level adjustments are before the amp sim. Sometimes all that’s needed to optimize the guitar sound is to lower the Event level in places where you want more definition, and raise it when you prefer heavier distortion.

- Too many low and high frequencies. Guitar amps were never about flat frequency response. Rolling off lows below the guitar’s range keeps out bass energy that has nothing to do with your playing, and rolling off the highs a bit simulates the high-frequency loss through long cables—something amp sims don’t emulate. In the next audio example, the first half is the same overly distorted sound as the previous audio example’s first half. The second half doesn’t change the input level, but rolls off the lows and highs with the Pro EQ, prior to the amp sim. Fig. 1 shows the Pro EQ settings.

In particular, listen to the spaces between notes. The version without EQ has a sort of bassy mud between notes that detracts from the part’s focus.

The bottom line is simple: If your amp sim doesn’t sound right, the quickest fix might be as simple as turning down the input level, and rolling off some lows and highs before the amp sim.
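The "normalize the dry track to -3 dB" habit mentioned above is just peak normalization. The following Python sketch shows the arithmetic on a list of sample values; the function names and the tiny test signal are made up for illustration, and Studio One does this for you when you normalize an Event.

```python
import math


def db_to_linear(db):
    """Convert a level in dB to a linear amplitude factor."""
    return 10 ** (db / 20)


def normalize_peak(samples, target_db=-3.0):
    """Scale samples so the highest absolute peak sits at target_db (relative to full scale)."""
    if not samples:
        return []
    peak = max(abs(s) for s in samples)
    if peak == 0:
        return list(samples)  # silence: nothing to scale
    gain = db_to_linear(target_db) / peak
    return [s * gain for s in samples]


# Hypothetical quiet dry-guitar samples.
dry = [0.0, 0.1, -0.2, 0.05]
normalized = normalize_peak(dry)
peak_db = 20 * math.log10(max(abs(s) for s in normalized))
```

Because every dry track then peaks at the same level, a preset's input drive setting sees a predictable signal.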

Why You Want to Level Ampire Presets

It’s great we can store presets, trade them with other users, and download free ones. But…when selecting different Ampire presets to decide how they fit with a track, you want their levels to match closely. Then, your evaluation of the sound will be based on the tone, not by whether the preset is softer or louder. However, consistent preset levels are not a given.

Having a baseline level for presets, so you don’t need to change the level every time you call up a new one, is convenient. You can’t really use VU meters for this, because you want sounds to have the same perceived level, which can be different from their measured level. For example, a brighter sound may measure as softer, but be perceived as louder because it has energy where our ears are most sensitive.

My standard of comparison is a dry guitar sound, because I want the same perceived level whether Ampire is enabled, or bypassed. You might prefer to have Ampire always be a few dB louder than your dry guitar—whatever makes you happy.

Enter LUFS

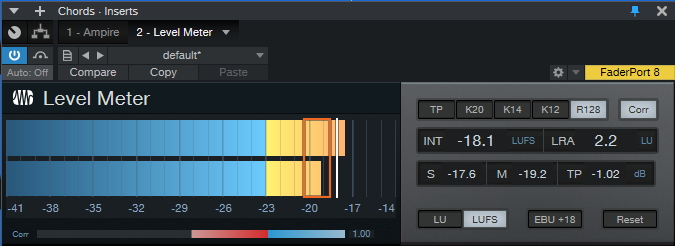

The LUFS (Loudness Units relative to Full Scale) measurement protocol measures perceived loudness. LUFS measurements allow streaming services like YouTube, Spotify, Apple Music, and others to adjust the volume of various songs to the same perceived level. This is why you don’t have to change the volume every time a different song shows up in a playlist. The system isn’t perfect, but it’s better than dealing with constant level variations. Fortunately, Studio One has a Level Meter plug-in that gives LUFS readings (fig. 1).

The Process

- Record at least two Events—one for chords, and one for single-note leads. If you use bass presets, you’ll also want an Event with a bass line. Record about a 15 second clip of continuous guitar chord progressions, and another clip with 15-30 seconds of single notes (and/or bass lines), also without pauses.

- Insert the Level meter after Ampire, and enable its LUFS and R128 buttons. Set the appropriate event to loop, and start playback.

- With Ampire bypassed, adjust the Event’s level for a nominal LUFS reading. I use -18 LUFS because that works well with Ampire, Helix, and other amp sims. After setting your standard level, it’s a good idea to lock the Events from editing (context menu > Toggle Edit Lock).

We interrupt these steps to bring you an important bulletin: The Level Meter reading that matters is the INT field. This averages out the audio, so you’ll see the reading change at first, and then settle down to a consistent LUFS reading. When you change levels, call up a different preset, or make any changes, click on the Reset button to re-start the averaging process. When doing any LUFS measurements, you can’t be sure the reading is correct until you’ve a) hit Reset, and b) played the event several times, which is why we want to loop it.

We now return to the step-by-step procedure.

- Enable Ampire, and call up a preset. Hit the Level Meter’s Reset button, and after the Event has played through a couple times, check the LUFS meter reading.

- In my case, an LUFS reading of -20 would mean the preset level is about 2 dB lower than my standard -18 LUFS level. So, I’d raise Ampire’s output control (fig. 2) by 2 dB. On the other hand, if the LUFS reading was -14, it would be about 4 dB louder than my standard. I’d instead need to lower the output level control by 4 dB.

- After adjusting the level, hit Reset on the Level Meter, and play the loop a few times. If the reading is the same as the nominal value…done! Otherwise, re-tweak.

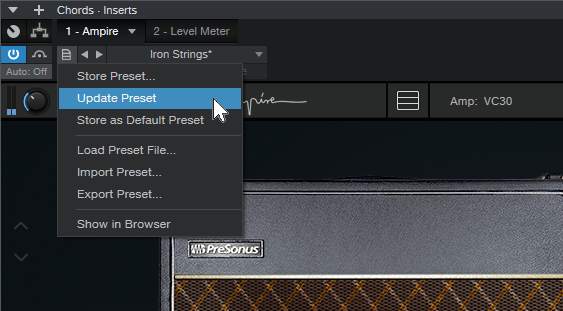

- The final step is to click on Update Preset (fig. 3), so you don’t have to do this again.

And while you’re at it, save the Song you used to do this testing. Then you can call it up again in the future, when you want to match preset levels.
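The adjustment in the steps above is simple subtraction, and can be sketched in Python as follows. The function names and the 0.5 dB tolerance are assumptions for illustration; the readings themselves would come from the Level Meter's INT field.

```python
def output_trim_db(measured_lufs, target_lufs=-18.0):
    """How many dB to move the preset's output control to reach the target loudness."""
    return target_lufs - measured_lufs


def needs_retweak(measured_lufs, target_lufs=-18.0, tolerance=0.5):
    """True if the settled reading is still further from the target than the tolerance."""
    return abs(target_lufs - measured_lufs) > tolerance


quiet_preset = output_trim_db(-20.0)  # preset reads 2 dB under the standard
loud_preset = output_trim_db(-14.0)   # preset reads 4 dB over the standard
```

A positive result means raise the output control, and a negative result means lower it. After each change, reset the meter and let the loop play through before trusting the new reading.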

Okay, so it took a little time to balance all your presets. But when deciding what preset to use in the future, you’ll be glad you set them all to a baseline level.

Studio One’s Session Bass Player

Studio One includes multiple algorithmic composition tools, but I’m not sure how many users are aware of them. So, let’s look at the way some of these tools can help expedite the songwriting process.

I’ve always prioritized speed when songwriting, because inspiration can disappear quickly. But, I’ve also found that good guide tracks (e.g., cool drum loops instead of metronome clicks) increase the inspiration factor. The trick is to create good guide tracks, without getting distracted into editing a guide track into a “part.”

A solid bass line helps drive a song, but when songwriting, I don’t want to take the time to grab a bass and do the necessary setup. The blog post Studio One’s Amazing Robot Bassist describes a simple way to create a bass part by hitting notes on the right rhythm, and then conforming them to the Chord track. This week’s tip builds on that concept to let Studio One’s session bass player get way more creative, and generate parts even more quickly.

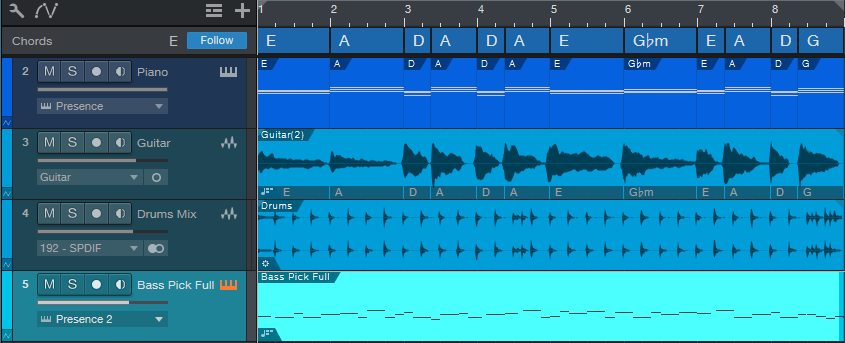

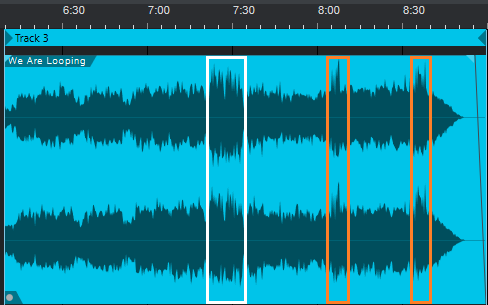

Fig. 1 shows a chorus being written. The process started with a rhythm guitar part (track 3), which I dragged up to the Chord track so it could parse the chord changes. I made a few changes to the Chord Track, then dragged it into a piano instrument track. This deposited MIDI notes for the chords, so now the piano played the chord progression. I then had the guitar follow the new Chord track, and the guitar and piano played together. Next up was adding a drum loop.

As to the bass part, there are three elements to having Studio One create a bass part.

1: Fill With Notes

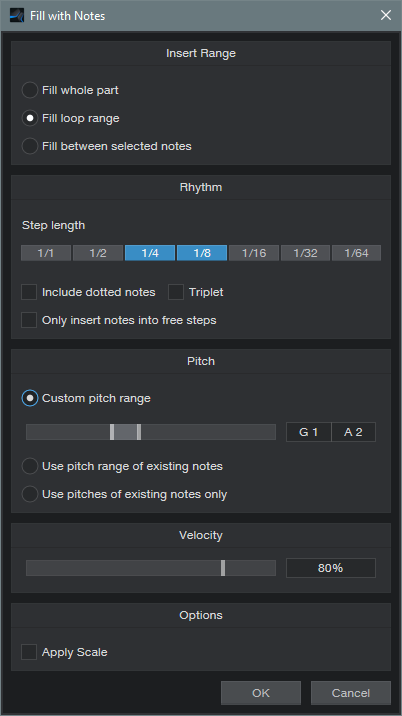

After selecting the bass’s blank Note Event, open the Edit Menu and choose Action > Fill with Notes. You’ll want to customize the settings for best results (fig. 2).

For example, choose a bass-friendly pitch range. For this song, I also wanted a fairly bouncy part, so the rhythm is made up of 1/4 and 1/8 notes. You might also want some half-notes in there. Click on OK, and you have a part.

Every time you invoke Fill with Notes, the notes will be different. If you don’t like the results, delete the notes, and try again. But don’t agonize over this—all you really want is notes with a rhythm you like, because the Chord Track and Scale will take care of the pitches.

Also note that if you don’t like the notes in, for example, the second half but like the first half, no problem. Delete the notes in the second half, set the loop to the second half, and try again.

2: Chord Track

Now have the part follow the Chord Track. Your follow options are Parallel, Narrow, and Bass. I usually prefer Narrow over Bass, but try them both. Now the notes follow the chord progression.

3: Choose and Apply Scale

This may not be necessary, but if the part is too busy pitch-wise, specify the scale note and choose Major or Minor Triad. Then, choose Action > Apply Scale. Now all the notes will be moved to the first, third, or fifth. Of course, you can also experiment with other scales—but remember, the object is to get an acceptable bass part down fast, so you can move on to songwriting.
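As a rough illustration of the idea (not Studio One's actual algorithm, which isn't documented here), the Python sketch below fills a region with random 1/4 and 1/8 notes in a bass-friendly range, then snaps each pitch to the nearest root, third, or fifth. The MIDI range, the function names, and the use of a single root note in place of the Chord Track are all assumptions.

```python
import random


def fill_with_notes(total_beats, durations=(1.0, 0.5), low=28, high=48, seed=None):
    """Fill a region with back-to-back notes of random length and pitch.

    durations are in beats (1.0 = quarter note, 0.5 = eighth note).
    low/high are MIDI note numbers; 28-48 is an assumed bass-friendly range.
    """
    rng = random.Random(seed)
    notes, position = [], 0.0
    while position < total_beats:
        length = min(rng.choice(durations), total_beats - position)
        notes.append({"start": position, "length": length,
                      "pitch": rng.randint(low, high)})
        position += length
    return notes


def snap_to_triad(pitch, root, minor=False):
    """Move a pitch to the nearest root, third, or fifth of the given triad."""
    allowed = (0, 3, 7) if minor else (0, 4, 7)
    candidates = [pitch + d for d in range(-6, 7)
                  if (pitch + d - root) % 12 in allowed]
    return min(candidates, key=lambda p: abs(p - pitch))


# Two bars of 4/4 over a C major chord (root = MIDI note 36, an assumption).
part = fill_with_notes(8, seed=1)
for note in part:
    note["pitch"] = snap_to_triad(note["pitch"], root=36)
```

As in the tip, the random pitches hardly matter. What you are really auditioning is the rhythm, because the snapping step decides which notes you end up hearing.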

Fig. 3 shows a bass part that Studio One’s session bass player “played.” This was on the second try.

Figure 3: The session bass player came up with a pretty cool part. I applied Humanize to the velocities so it didn’t sound too repetitive.

Finally, let’s hear the results. The vitally important point to remember is that all this was created, start to finish, in under four minutes (which included tuning and plugging in the guitar). The end result was some decent guide tracks, so I could start work on the ever-crucial vocal lead line and lyrics. Thank you, session bass player!

Percussion in Motion

I’m a fan of hand percussion. Tambourines, cowbells, claves, guiros…you name it. But mixing it just right is always tricky. When mixed too high, the percussion becomes a distraction. Too low, and it might as well not be there. The object is to find the sweet spot between those extremes.

My solution is simple: use X-Trem’s Autopan function to give motion to percussion. The following audio example has cowbell (no jokes, please) and tambourine. The cowbell keeps a rock-solid hit on quarter notes, and is panned to an equally rock-solid center. But the tambourine is a different story. I’ve mixed both of them higher than normal, so you can clearly hear how they interact.

In the first half, both the tambourine and cowbell are panned to center. After a brief gap, the section repeats, with X-Trem moving the tambourine back and forth in the stereo field. In a real mix, both percussion parts would be mixed lower, so the tambourine’s motion would be something you sensed rather than heard. The end result is a feeling of more motion with the percussion, because the tambourine’s wanderings keep it from becoming repetitive.

X-Trem Setup

First things first: the track must be stereo. If you recorded it in mono, set the Channel Mode to stereo, select the clip, and type ctrl+B to bounce the clip to itself. This converts it to stereo.

I prefer not to have a regular, detectable panning change. A random LFO waveform would be ideal, but the X-Trem’s 16 Steps waveform is equally good. Slower pan rates are better, because you don’t want the pan position to change so fast that a percussion hit pans while it’s still sustaining or playing. I’d recommend 2 beats (changes every 1/8th note, as in the audio example) or every 4 beats for slower tempos or percussion that sustains.

Draw a pattern that’s as close as possible to seeming random (fig. 1). This prevents the panning from becoming repetitive.

Placement

If you want the panning to move around the center, fine—pan the track to center, and you’re done. But if you want the panning to move (for example) between hard left and center, remember that the pan control becomes a balance control in stereo. So if X-Trem pans the audio more toward the right, it will become quieter. To get around this, pan the channel to center, but follow the X-Trem with a Dual Pan that sets the actual panning range. Fig. 2 shows settings for panning between the left and center, while maintaining a constant level.
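One way to picture "restrict the range, but keep the level constant" is the Python sketch below. It is not a model of X-Trem or Dual Pan internals: the step pattern is invented, and the constant-power (sine/cosine) pan law is an assumption used only to show that left and right gains can move while the combined power stays the same.

```python
import math


def remap(step, lo=-1.0, hi=0.0):
    """Map a step value in [-1, 1] to a pan position in [lo, hi].

    -1.0 is hard left, 0.0 is center, +1.0 is hard right.
    """
    return lo + (step + 1.0) / 2.0 * (hi - lo)


def constant_power_gains(pan):
    """Left/right gains for a pan position, using an assumed sine/cosine pan law."""
    angle = (pan + 1.0) * math.pi / 4.0
    return math.cos(angle), math.sin(angle)


# A hand-drawn, random-looking 16-step pattern (values are made up).
pattern = [0.2, -0.7, 0.9, -0.1, -1.0, 0.5, 0.0, 1.0,
           -0.4, 0.7, -0.9, 0.3, -0.2, 0.8, -0.6, 0.1]

# Confine the motion to the range between hard left and center.
positions = [remap(step) for step in pattern]
gains = [constant_power_gains(p) for p in positions]
```

Every step lands somewhere between hard left and center, and the sum of the squared left and right gains is 1 at each step, so the tambourine moves without getting quieter.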

As to the amount of X-Trem modulation depth…it depends. If you’ve been to my seminars where I talk about “the feel factor” with drum parts, you may recall that I like to keep the kick right on the beat, and change timings around it. A similar concept holds true with panning percussion. In the audio example, the cowbell is the anchor, and the tambourine dances around it. If both are moving, the parts can become a distraction rather than an enhancement.

Now you know how to make your percussion parts tickle the listener’s ears just a little bit more…and given the audio example, I’m proud of all of you for not stooping to a “more cowbell” joke!

Sneaky Mastering Page Tip

For this tip to make sense, I need to be upfront about two personal biases.

Personal bias #1: Drums should sound percussive. I can’t remember the last time I used compression on drums (although limiting or saturation is helpful to shave off extreme peaks).

Personal bias #2: I avoid using bus processors. The mix has to sound great without any bus processors, so the mastering process can take the mix to the next level.

Why I have these biases would take up a whole other tip. Besides, if your music sounds great with bus processors and compressed drums, then by all means—keep using bus processors, and compress your drums!

But There’s a Problem: “Super Peaks”

As a result of my biases, I send mixes with a wide dynamic range to the Mastering page, because that’s where I can control the precise amount of dynamic range processing in context with other related pieces of music. But, not restricting dynamics means that from time to time, there are “super peaks”—for example, if the kick, snare, cymbal, keyboard stabs, and a guitar power chord all hit at the same time (fig. 1).

These super peaks go way higher than most peaks, so if you normalize, the super peaks prevent any significant peak level increase. Using limiting or compression on the track works, but alters the percussive character. Of course, the beauty of the Song/Mastering page synergy is that you can tweak the mix in the Song page, and the Mastering page will reflect the results. However, with super peaks that combine multiple instrument sounds occurring simultaneously, tracking down which tracks to reduce, and by how much, gets complicated—especially if you have to deal with a dozen or so super peaks.

The Gain Envelope is the perfect tool for dealing with super peaks. You can bring down the peak and the area immediately adjacent to it, without neutering the percussive waveform (fig. 2). The Gain Envelope just lowers the peak’s level a bit—it doesn’t flatten the peak.
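The difference between dipping the gain around a peak and flattening it can be sketched in Python. This is only an illustration of the principle: in Studio One you draw the Gain Envelope by hand, whereas here a hypothetical function finds samples above a limit and applies a smooth raised-cosine dip of about -3 dB around each one. The sample values, limit, and window width are made up.

```python
import math


def db_to_linear(db):
    """Convert a level in dB to a linear amplitude factor."""
    return 10 ** (db / 20)


def tame_super_peaks(samples, limit, cut_db=-3.0, half_width=4):
    """Dip the gain smoothly around every sample whose level exceeds `limit`."""
    gains = [1.0] * len(samples)
    floor = db_to_linear(cut_db)
    for i, s in enumerate(samples):
        if abs(s) > limit:
            start = max(0, i - half_width)
            stop = min(len(samples), i + half_width + 1)
            for j in range(start, stop):
                # shape is 1.0 at the peak and falls toward 0 at the window edges.
                shape = 0.5 * (1 + math.cos(math.pi * (j - i) / (half_width + 1)))
                gains[j] = min(gains[j], 1.0 - (1.0 - floor) * shape)
    return [s * g for s, g in zip(samples, gains)]


def normalize(samples, target=1.0):
    """Scale so the highest absolute peak equals `target`."""
    peak = max(abs(s) for s in samples)
    return [s * target / peak for s in samples]


# A normalized "mix" where one super peak (1.0) towers over typical peaks (~0.4).
music = [0.3, -0.4, 0.35, -0.3, 0.4, -0.35, 1.0, -0.38,
         0.4, -0.3, 0.35, -0.4, 0.3, -0.35, 0.4, -0.3]

tamed = tame_super_peaks(music, limit=0.7)
louder = normalize(tamed)
gain_db = 20 * math.log10(louder[0] / music[0])
```

Because only the super peak was holding the level down, re-normalizing raises everything outside the dipped region by the amount of the cut, and the peak keeps its shape instead of being clipped flat.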

Although the Mastering page doesn’t have Gain Envelopes, there’s still a way to apply that Song page advantage to the Mastering page. Usually, audio goes from the Song page to the Mastering page. In this case, we’ll do the reverse.

- Create a new Song.

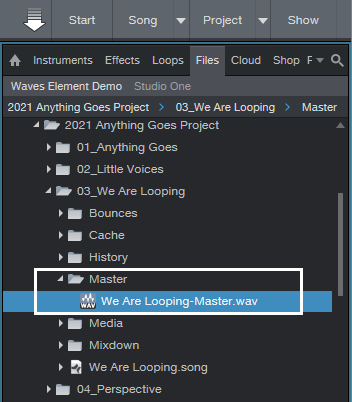

- Open the Browser, and unfold the Project’s folder. Then, unfold the Song with the file that needs editing, and open the Song’s Master folder to expose the file used by the Mastering page (fig. 3).

- Drag the Master file into the new Song, and start editing.

- Use the Gain envelope to bring down the super peaks. While the file is open, you can make any other needed changes (e.g., increasing the level slightly at the beginning to pull listeners into the music).

- After making your changes, rename the existing Master file to something like “Song-Master Old.wav.” Then, drag the edited file from the Song into the Master folder, and rename it with the original name, like “Song-Master.wav.” Now your project will think the modified file is the Master file (fig. 4). Once it checks out as okay, you can delete the old Master file.

Figure 4: The original file is on the left. The version on the right had the super peaks reduced, and was then re-normalized.

Mission accomplished! Both files in fig. 4 were normalized, but compare the file on the right—it’s more consistent and louder, yet the dynamics remain intact. It was necessary to change only the levels of ten super-peaks by about -3 dB. I also brought up the level in the beginning section, to make a stronger entrance after the end of the previous song. The end result was about a +2.0 LUFS increase.

So there you have it: you can win the loudness wars—but with a bloodless coup that doesn’t squash your audio.

Shimmer Reverb

As reverb transitions from being simply a way to emulate an acoustic space to an effect in its own right, new reverb designs are becoming more popular. One of these is “shimmer” reverb, which uses pitch shifting before the reverb to add high-frequency content. Some of these recirculate the pitch-shifted output back to the input; although this implementation doesn’t do that, it still gives a solid shimmer effect—check out the audio examples.

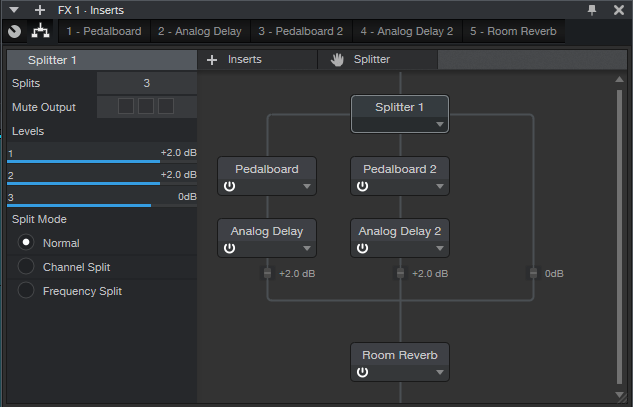

After hearing the examples, you’ll probably want to download the multipreset included for Pro users. If you’re an Artist user and have the Ampire add-on, you can do this effect by using buses. Fig. 1 shows the Shimmer Reverb’s block diagram.

The Splitter has three splits. Two go to a Pedalboard with a Pitch Shifter module, set for an octave higher shift (fig. 2). The third provides a dry signal.

Because the fidelity drops off with extreme transposition, having two Pitch Shifters in parallel gives a smoother sound. Note that Mix Harmony is set full up, and Harmony Detune is up halfway. Feel free to experiment with the Detune parameter.

To smooth the sound further, an Analog Delay (fig. 3) follows each Pitch Shifter. They have identical settings, except that one is set for 31 ms of delay, and the other for 23 ms.

You’ll need to set the wet/dry balance in the FX Chain itself, using the level sliders for the three splits. Or, eliminate the dry split, and use this only as a bus or FX Channel effect.

And that’s all there is to shimmering your sound. Happy ambiance!

Download the Shimmer Reverb multipreset here.

The Really Grand Piano

Having worked on several classical and piano-oriented sessions, I’ve had the opportunity to hear gorgeous grand pianos in their native habitat. But it spoiled me. When I had to use sampled pianos in other types of productions, it always seemed something was missing.

This tip puts some of the low-end mojo back into sampled pianos. Sure, it’s done with smoke and mirrors, not by having wood interact with a room—but check out the audio example at the end, and you’ll hear what Beethoven has to say about it.

How It Works

The bass enhancement occurs by mixing a sine wave behind the main piano sound, but only in the lower octaves, and very subtly. This adds bass reinforcement that you won’t find in samples.

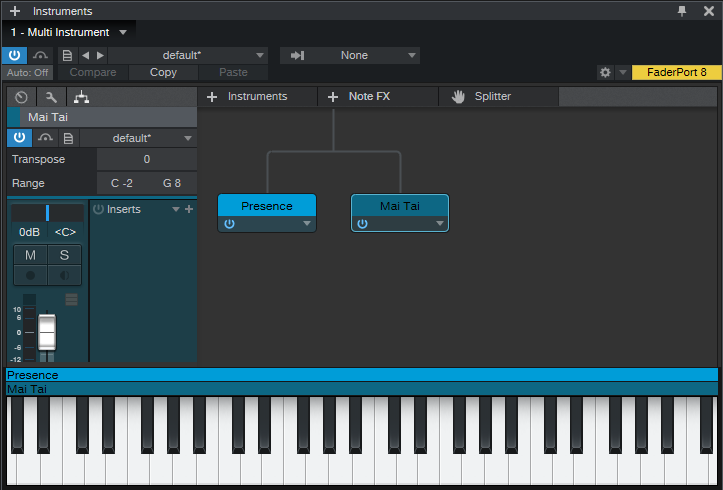

Set up a Multi-Instrument (sorry Artist users, this is a Pro version-only feature) that combines the piano of your choice, like the Presence Acoustic Full, and Mai Tai (fig. 1).

For Mai Tai, you want the simplest sound possible—one sine wave oscillator, no modulation except for an amplitude envelope, no random phase, and no effects other than EQ. By turning the Filter cutoff down to around 100 Hz or so, turning Key tracking all the way down, and using the EQ (in the bass range) to take out all the highs, we now have the sine wave tracking your playing on only the lowest notes (fig. 2).
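To get a feel for why a fixed 100 Hz cutoff with no key tracking keeps the sine wave out of the higher notes, the Python sketch below estimates how much a low-pass filter attenuates a sine at each note's fundamental. The 4-pole Butterworth-style response is an assumption chosen for illustration, not a description of Mai Tai's actual filter, and the EQ's extra high-cut is ignored.

```python
import math


def midi_to_hz(note):
    """Fundamental frequency of a MIDI note number (A4 = note 69 = 440 Hz)."""
    return 440.0 * 2 ** ((note - 69) / 12)


def lowpass_gain_db(freq_hz, cutoff_hz=100.0, poles=4):
    """Attenuation of an idealized Butterworth-style low-pass at freq_hz (assumed shape)."""
    magnitude = 1.0 / math.sqrt(1.0 + (freq_hz / cutoff_hz) ** (2 * poles))
    return 20 * math.log10(magnitude)


# A1 (55 Hz) sits below the cutoff; A4 (440 Hz) sits more than two octaves above it.
low_note_db = lowpass_gain_db(midi_to_hz(33))
high_note_db = lowpass_gain_db(midi_to_hz(69))
```

Under these assumptions, the sine passes almost untouched on the lowest notes and is tens of dB down by the middle of the keyboard, which matches what you hear: reinforcement in the bottom octaves and nothing added higher up.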

Tweaking

The Mai Tai’s level setting is crucial. You want an almost subliminal effect—something you don’t notice unless you mute the Mai Tai. Check out this audio example, but note that I’ve mixed the Mai Tai up higher than I normally would, so you can hear what the sine wave adds to the piano sound. Also note that even with the extra emphasis on the lower octaves, you can’t hear an added sine wave on the higher notes. This is important for a realistic sound.

Finally, although I’ve emphasized using this with piano, the same technique can add a commanding low end to other sampled instruments, like acoustic guitar—yes, you can change your parlor guitar’s body into a jumbo—no woodworking required!

Attack Delay—Done Right!

The Attack Delay effect, used primarily with guitar, fades in a note or chord over the initial attack to give a more pad-like sound. The effect feeds audio into a gate with an attack time, and triggers the gate when a note or chord hits.

However, you need a brief silence between notes or chords (I prefer using this with chords), so the gate can reset prior to initiating the next attack. It’s kind of annoying to have to modify your playing style to accommodate this pause. Also, if the gate threshold is too high, you won’t hear any note—and if it’s too low, you might lose the attack effect. Attack Delay stompboxes can be iffy, which may be one reason why you don’t see one on every pedalboard.

Nonetheless, this can be a beautiful effect when done right…and as the audio example shows, Studio One can do it right.

Attack Delay Setup

The key is to insert the Gate in the track you want to process, but not trigger the Gate from that track. Instead, you create a copy of the original track, and optimize it for triggering the Gate. The copy then controls the Gate through its sidechain (you don’t listen to the copied track).

(Optionally, before setting this up, consider compressing or limiting the original guitar track so that it has a longer sustain. You don’t want the guitar to fade too much before the attack fades in.)

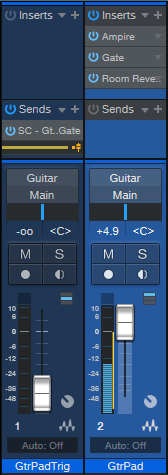

Fig. 1 shows the mixer setup. The GtrPadTrig track’s pre-fader send goes to the Gate’s sidechain. Turn down this track’s channel fader, because we don’t want to hear the copied track. The guitar track in the audio example inserts Ampire before the Gate, and reverb after the Gate. The reverb adds an ethereal quality as the guitar fades into the chord.

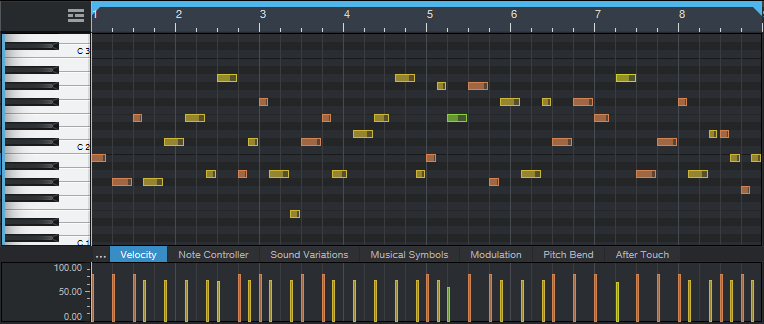

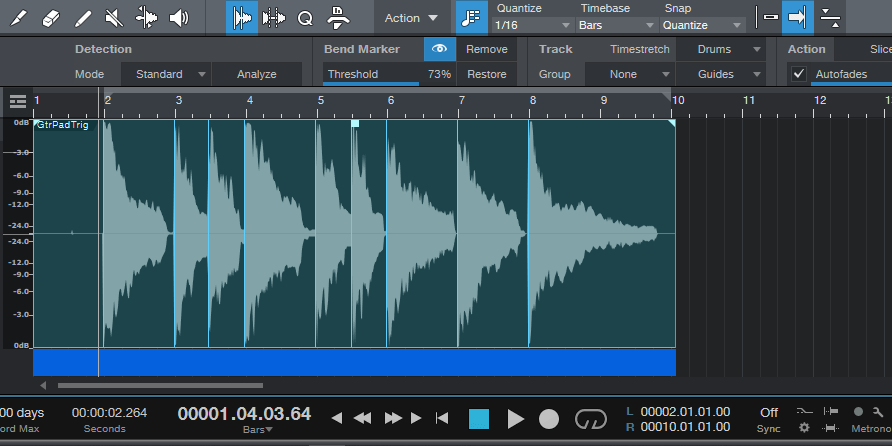

Next, prep the control track in the Edit window. Open the Audio Bend panel (to the right of the speaker icon in the Edit window toolbar), right-click on the Event, and choose Detect Transients. If necessary, adjust the Bend Marker Threshold (or remove and add Bend Markers) so that Bend Markers appear only at the beginning of chords or notes (fig. 2).

Figure 2: The beginning of each chord has a Bend Marker. This shows the waveform prior to splitting.

Mind the Gap

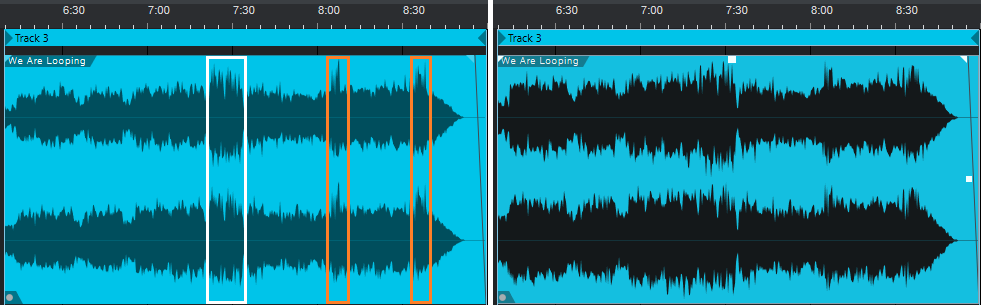

Right-click on the Event, and choose Split at Bend Markers. All the Events will be separate and selected. Click on the right edge of any Event, and drag to the left. Because all the Events are selected, this opens up a gap before all the chord attacks (fig. 3).

Now start playback, and adjust the Gate parameters. This is a little tricky at first, because you want the Threshold set so that triggers coming in from the sidechain open the Gate, coupled with a Release time that’s short enough so that the Gate doesn’t shut off immediately. I usually leave about a 100 ms gap between chord attacks, and set the Gate release time to 60 ms. Your mileage may vary.

If the triggering isn’t reliable, adjust the Threshold, gap length, or Release. To edit the gap, select all the events and vary the right edge of one of them—they’ll all move together. Sometimes, there might be one obstinate note that doesn’t trigger correctly, in which case you can select only the Event before it, and vary its gap for reliable triggering with the next chord.
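The gap-opening step amounts to ending every Event a fixed time before the next one starts. A minimal Python sketch, using made-up chord onset times in milliseconds and a hypothetical function name:

```python
def open_gaps(onsets_ms, total_length_ms, gap_ms=100):
    """Return (start, end) pairs where each event ends gap_ms before the next begins.

    onsets_ms are the Bend Marker positions (chord attacks), in ascending order.
    The last event is shortened relative to the end of the original audio.
    """
    events = []
    for i, start in enumerate(onsets_ms):
        if i + 1 < len(onsets_ms):
            next_start = onsets_ms[i + 1]
        else:
            next_start = total_length_ms
        end = max(start, next_start - gap_ms)  # never end before the event starts
        events.append((start, end))
    return events


# Three chords, two seconds apart, in a six-second clip (assumed values).
events = open_gaps([0, 2000, 4000], 6000)
```

Each pair is a chord that stops 100 ms before the next attack, which is the silence the Gate needs so it can close and then fade in again on the next chord.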

Yes, this takes a little effort to set up, but it’s cool. Besides, there’s nothing wrong with exploring an effect that remains somewhat rare, because it’s hard to get right—fortunately, Studio One can get it right.