Tag Archives: Craig Anderton

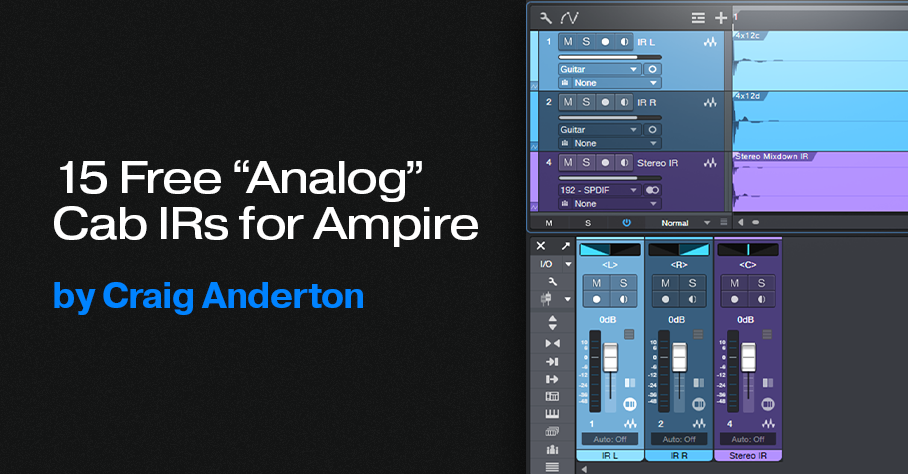

15 Free “Analog” Cab IRs for Ampire

This week, I wanted to give y’all a little gift: 15 “analog cab” IRs that provide alternate User Cabinet sounds for Ampire. Just hit the download link at the bottom, and unzip.

If you’re not familiar with the concept of an analog cab, it’s about using EQ (not the usual digital convolution techniques) to model a miked cab’s response curve. This gives a different sonic character compared to digitally generated cabs. (For more information, see Create Ampire Cabs with Pro EQ2.) An analogy would be that convolution creates a photograph of a cab, while analog techniques create a painting.

The 15 impulse responses (IRs) in the Ampire Analog Cab IRs folder were made by sending a one-sample impulse through EQ, and rendering the result. This process creates the WAV file you can then load into Ampire’s User Cabinet. The IRs include the following cab configurations: 1×8, 1×10, (4) 1×12, (3) 2×12, 4×10, and (5) 4×12.
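For anyone curious about what "sending a one-sample impulse through EQ, and rendering the result" looks like outside a DAW, here is a rough Python sketch. It is not how the downloadable IRs were made: the sample rate, the three peaking bands in `EXAMPLE_BANDS`, and all function names are assumptions chosen for illustration, and the filter math is the widely used Audio EQ Cookbook peaking biquad rather than Pro EQ's own curves.

```python
import math
import struct
import wave

SAMPLE_RATE = 44100  # assumption: the post doesn't state a sample rate
IR_LENGTH = 2048     # the post says the IR files are 2,048 samples long

# Hypothetical (frequency in Hz, gain in dB, Q) settings, for illustration only
EXAMPLE_BANDS = [(120.0, 4.0, 1.0), (2500.0, 5.0, 1.5), (8000.0, -12.0, 0.7)]


def peaking_coeffs(freq_hz, gain_db, q, fs=SAMPLE_RATE):
    """Peaking-EQ biquad coefficients (Audio EQ Cookbook form), normalized by a0."""
    amp = 10 ** (gain_db / 40)
    w0 = 2 * math.pi * freq_hz / fs
    alpha = math.sin(w0) / (2 * q)
    a0 = 1 + alpha / amp
    b = ((1 + alpha * amp) / a0, -2 * math.cos(w0) / a0, (1 - alpha * amp) / a0)
    a = (-2 * math.cos(w0) / a0, (1 - alpha / amp) / a0)
    return b, a


def run_biquad(samples, coeffs):
    """Filter a list of samples with one biquad section (direct form I)."""
    (b0, b1, b2), (a1, a2) = coeffs
    x1 = x2 = y1 = y2 = 0.0
    out = []
    for x in samples:
        y = b0 * x + b1 * x1 + b2 * x2 - a1 * y1 - a2 * y2
        x2, x1 = x1, x
        y2, y1 = y1, y
        out.append(y)
    return out


def make_ir(bands, length=IR_LENGTH):
    """Send a one-sample impulse through each EQ band in series."""
    ir = [1.0] + [0.0] * (length - 1)
    for freq_hz, gain_db, q in bands:
        ir = run_biquad(ir, peaking_coeffs(freq_hz, gain_db, q))
    return ir


def write_mono_wav(path, samples, fs=SAMPLE_RATE):
    """Normalize to full scale and save as a 16-bit mono WAV file."""
    scale = 32767 / max(abs(s) for s in samples)
    frames = b"".join(struct.pack("<h", round(s * scale)) for s in samples)
    with wave.open(path, "wb") as wav:
        wav.setnchannels(1)
        wav.setsampwidth(2)
        wav.setframerate(fs)
        wav.writeframes(frames)


ir = make_ir(EXAMPLE_BANDS)
```

Calling `write_mono_wav("my_cab.wav", ir)` (the file name is just a placeholder) would save the rendered impulse as a WAV file. With all gains at 0 dB, the result is simply the original impulse, which is a handy sanity check.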

How to Use Analog Cabs

- The simplest application is dragging an analog cab IR into Ampire’s User Cabinet image.

- To create cab stacks, insert different cabs in the User Cabinet’s three Mic slots. Vary their mix with the Mic Edit Controls.

- Layer two Ampires, one with a convolution-based cab impulse, the other with an analog cab impulse. This gives a “best of both worlds” sound.

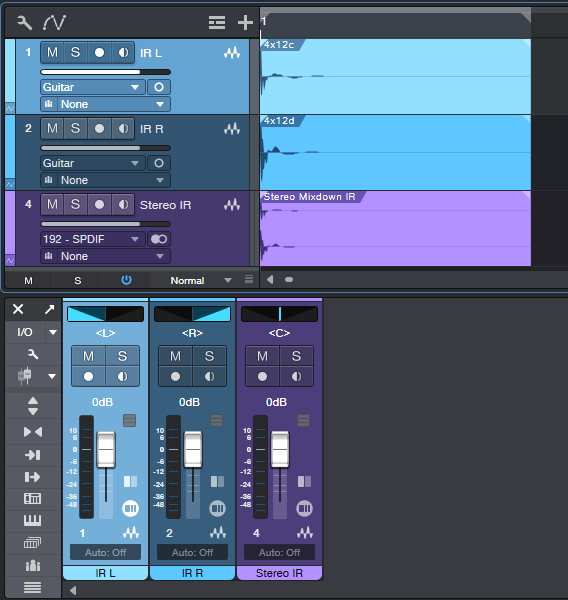

- Create stereo analog cabs that work with a single Ampire User Cabinet. Insert different analog cab IRs in two tracks, pan them oppositely, then export the mix (fig. 1 shows a typical setup). Drag the Event created by the export into Ampire’s User Cab. Note that the impulse response WAV files are very short—only 2,048 samples.

In any event, whether you go for individual impulses, layering, or creating stereo impulses, I think you’ll find that “analog” cab IRs extend Ampire’s sonic palette even further. And if you have any questions, or feedback on using analog cabs, feel free to take advantage of the Comments section!

Download the Ampire Analog Cab IRs.zip file below:

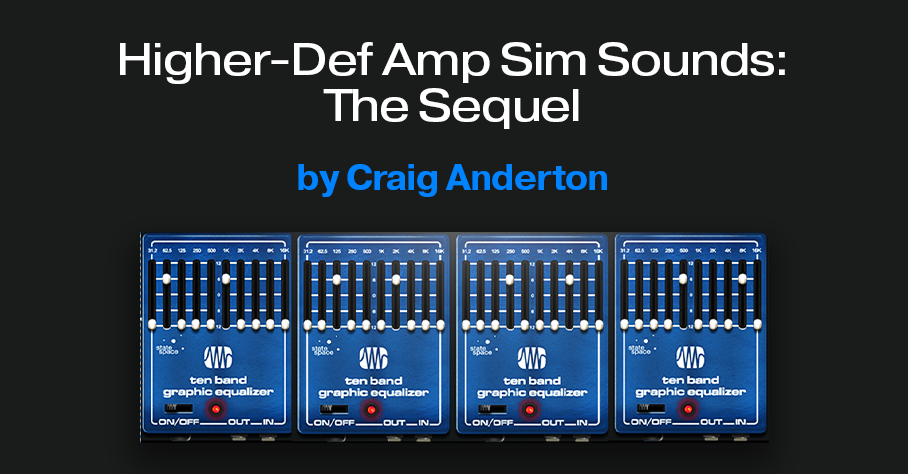

Higher-Def Amp Sim Sounds: The Sequel

Many of these tips have their genesis in asking “What if?” That question led to the Higher-Def Amp Sim Sounds blog post, which people seemed to like. But then I thought “What about taking this idea even further?” Much to my surprise, it could go further. This week’s tip, based on the Ampire High Density pack, is ideal for increasing the definition and articulation of high-gain and metal amp sims.

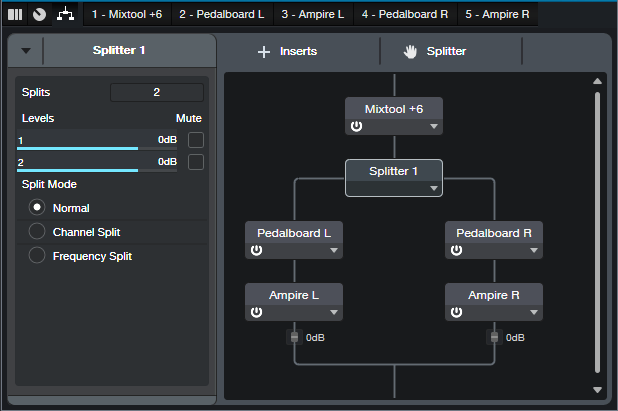

Fig. 1 shows the FX Chain (the download link is available at the end of this post). The Splitter is in Channel Split mode. If your guitar track is mono (so it doesn’t have two channels), change the track mode to stereo, and then bounce the Event to itself. This creates a dual mono track, which is optimal for this application.

With traditional multiband processing, each band represents a range of frequencies. Distorting a limited range of frequencies reduces intermodulation distortion. The result is a more defined, articulated sound quality.
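To see why splitting frequencies before distortion helps, here is a small Python experiment. Treat it as a sketch under assumptions: a `tanh` soft clipper stands in for an amp sim, the two test tones (1 kHz and 1.3 kHz) are arbitrary, and all names are made up for this example. It compares the level of the 700 Hz intermodulation product (2 x F1 - F2) when both tones hit one distortion stage versus when each tone gets its own stage and the outputs are mixed.

```python
import math

FS = 48000                # sample rate (an assumption for this sketch)
N = 4800                  # 0.1 s, so every frequency used completes whole cycles
F1, F2 = 1000.0, 1300.0   # two hypothetical test tones
IMD_FREQ = 2 * F1 - F2    # 700 Hz: in neither input tone, nor a harmonic of either


def tone(freq):
    return [math.sin(2 * math.pi * freq * n / FS) for n in range(N)]


def distort(samples, drive=4.0):
    """tanh soft clipper, used here as a simple stand-in for an amp sim."""
    return [math.tanh(drive * s) for s in samples]


def level_at(samples, freq):
    """Amplitude of one frequency component (single-bin DFT)."""
    re = sum(s * math.cos(2 * math.pi * freq * n / FS) for n, s in enumerate(samples))
    im = sum(s * math.sin(2 * math.pi * freq * n / FS) for n, s in enumerate(samples))
    return 2 * math.hypot(re, im) / len(samples)


# Case 1: both tones go into the same distortion stage
both = [a + b for a, b in zip(tone(F1), tone(F2))]
one_amp = level_at(distort(both), IMD_FREQ)

# Case 2: each tone gets its own distortion stage, then the outputs are mixed
mixed = [a + b for a, b in zip(distort(tone(F1)), distort(tone(F2)))]
two_amps = level_at(mixed, IMD_FREQ)

print(f"700 Hz product, one amp:  {one_amp:.4f}")
print(f"700 Hz product, two amps: {two_amps:.2e}")
```

In the second case, each stage only generates harmonics of its own tone, so the 700 Hz product essentially vanishes. Real guitar signals and real amp sims are far more complex, but the principle is the same.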

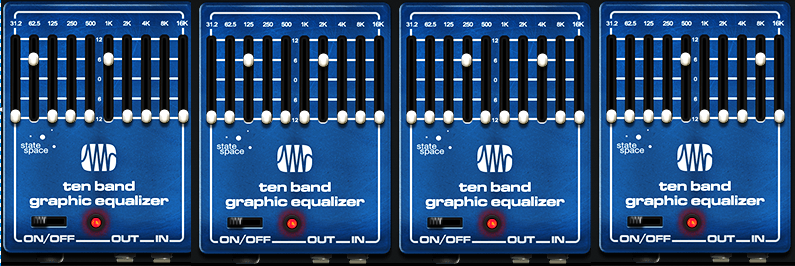

Fig. 1 implements a variation on multiband processing. It has four amps, but inserts Ampire’s Ten Band Graphic Equalizer before each amp. The graphic EQ sends two narrow frequency bands into each amp. Choosing frequency bands that are as far apart as possible reduces intermodulation distortion even further than standard multiband processing.

Referring to fig. 2, two bands in each graphic EQ are at +6 dB. The others are all at 0. Note how the various EQs offset the bands to different frequencies.

The Dual Pan plug-ins create a stereo image. With a traditional multiband setup, I tend to pan the low- and high-frequency bands to center, and spread the lower mids and upper mids in stereo. That doesn’t apply here, because there aren’t wide frequency ranges. Use whatever panning gives a stereo image you like.

A waveform is worth a thousand words, so check out the audio example. The first half is guitar going through Ampire’s German Metal amp sim. The second half uses this technique, with the same guitar track and amp sim settings. I think you’ll hear quite a difference.

Can This Be Taken Even Further?

Yes, it can. I also tried using eight splits. Because the Splitter module handles a maximum of five splits, I duplicated the track with the FX Chain, using the Duplicate (complete) option, and fed both tracks with the same guitar part. The 31.2 Hz and 16 kHz bands aren’t particularly relevant, so I ignored those and fed one band from each EQ into an amp. As expected, this asks quite a bit of your CPU. Consider transforming the track to rendered audio (and preserving the realtime state, in case you need edits in the future).

However, I’m not convinced I liked the sound better. That level of definition seemed a little too clean for a metal amp sim. Sure, give it a try—but I feel the setup in this tip is the sweet spot of sound quality and convenience.

Download the FX Chain below!

Making Sense of Custom Colors

Over four years ago, the blog post Colorization: It’s Not Just about Eye Candy covered the basics of using color. However, v6.1’s Custom Colors feature goes way beyond Studio One’s original way of handling colors.

The Help Viewer describes Custom Color operations, so we’ll concentrate on the process of customizing colors efficiently for your needs. For example, my main use for colors is to distinguish track types (e.g., drums, synth, loops, voice, and guitar). Then, changing the color’s brightness or saturation can indicate specific attributes within a track group, like whether a track is a lead part or background part, or whether a part is finished or needs further editing.

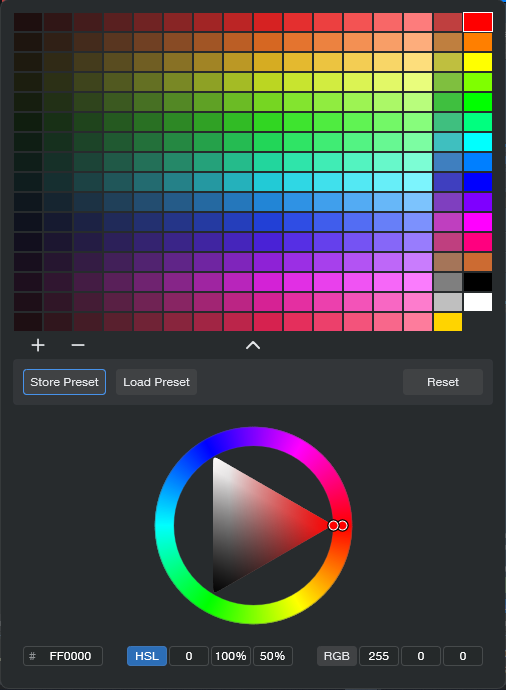

Opening the Custom Colors window and seeing all those colors may seem daunting. But as you’ll see, specifying the colors you want is not difficult.

What Are Hex, HSL, and RGB?

Electronic displays have three primary colors—red, green, and blue. Combining these produces other colors. For example, combining red and blue creates purple, while combining green and blue creates cyan. The three fields at the bottom of the expanded Custom Colors window (fig. 1) show the three main ways to define colors (left to right): Hex, HSL (Hue, Saturation, Lightness), and RGB (Red, Green, Blue). These are simply three different ways to express the same color.

RGB uses three sets of numbers, from 0 to 255, to express the values of Red, Green, and Blue. 255, 0, 0 would mean all red, no green, and no blue.

Hex strings together three sets of two hex digits. The first two digits indicate the amount of red, the second two the amount of green, and the final two the amount of blue.
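As a quick illustration of how RGB and Hex describe the same color, here is a minimal Python sketch. The function names are made up for this example, and whether Studio One displays a leading `#` is not something this post states, so the sketch simply accepts either form.

```python
def rgb_to_hex(red, green, blue):
    """Join three 0-255 values into a six-digit hex string (red, green, blue)."""
    for value in (red, green, blue):
        if not 0 <= value <= 255:
            raise ValueError("each channel must be in the range 0-255")
    return f"{red:02X}{green:02X}{blue:02X}"


def hex_to_rgb(hex_string):
    """Split a six-digit hex string back into (red, green, blue)."""
    digits = hex_string.lstrip("#")
    return tuple(int(digits[i:i + 2], 16) for i in (0, 2, 4))


print(rgb_to_hex(255, 0, 0))   # FF0000 (all red, no green, no blue)
print(hex_to_rgb("00FFFF"))    # (0, 255, 255), which is cyan: green plus blue
```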

HSL is arguably the most intuitive way to specify custom colors, so that’s the option selected in fig. 1.

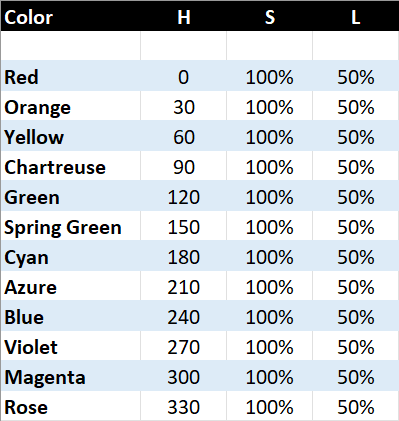

You can think of the spectrum of colors as a circle that starts at red, goes through the various colors, and ends up back at red. So, each color represents a certain number of degrees of rotation on that wheel. The number of degrees corresponds to the Hue (color), represented by the H in HSL. Each main color is 30 degrees apart along the wheel.

S represents the amount of saturation, from 0 to 100%. This defines the color’s vibrancy—with 100% saturation, the color is at its most vibrant. Pulling back on saturation mutes the color more. L is the luminance, which is basically brightness. Like saturation, the value goes from 0 to 100%. As you turn up luminance, the color becomes brighter until it washes out and becomes white. Turn luminance down, and the color becomes darker.

The Payoff of Custom Colors

Here’s why it’s useful to know the theory behind choosing colors. As mentioned at the beginning, I use two color variations for each group of tracks. For example, vocal tracks are green. I wanted bright green for lead vocals, and a more muted green for background vocals. For the bright green color, I created a custom color with HSL values of 120, 100%, and 50%. For the alternate color, I used the same values except for changing Saturation to 50%.
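If you want to check what those two greens look like in RGB terms, Python's standard `colorsys` module can do the conversion. This is only a sketch: it assumes Studio One's HSL fields follow the standard HSL model, and the helper name `hsl_to_rgb` is made up here. Note that `colorsys` uses the order hue, lightness, saturation, with every value scaled from 0 to 1.

```python
import colorsys


def hsl_to_rgb(hue_degrees, saturation_pct, lightness_pct):
    """Convert HSL (degrees, percent, percent) to 0-255 RGB values."""
    r, g, b = colorsys.hls_to_rgb(
        hue_degrees / 360, lightness_pct / 100, saturation_pct / 100
    )
    return tuple(round(channel * 255) for channel in (r, g, b))


lead_vocals = hsl_to_rgb(120, 100, 50)        # the bright green
background_vocals = hsl_to_rgb(120, 50, 50)   # same hue, saturation at 50%

print(lead_vocals)        # (0, 255, 0)
print(background_vocals)  # (64, 191, 64)
```

Lowering saturation pulls the three RGB values toward each other, which is why the variation reads as a more muted version of the same hue.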

Fig. 2 shows the custom color parameter values used for the 12 main track groups. The right-most column in fig. 1 shows the main track group colors. The next column to the left shows the variation colors, which have 50% saturation. In the future, I’ll be adding more colors to the 12 original colors (for example, brown is the 13th color down from the top in fig. 1’s custom colors). Fortunately, the custom color feature lets you save and load presets.

The brain can parse images and colors more quickly than words, and this activity occurs mostly in the brain’s right hemisphere. This is the more intuitive, emotional hemisphere, as opposed to the left hemisphere that’s responsible for analytical functions like reading words. When you’re in the right hemisphere’s creative zone, you want to stay there—and v6.1’s track icons and custom colors help you do that.

But Wait…There’s More!

Don’t forget that Studio One also has overall appearance preferences at Options > Appearance. This is kind of like a “master volume control” for colors. If you increase contrast, the letters for track names, plug-ins, etc. really “pop.” For my custom colors, increasing the overall Luminance emphasizes the difference between the main track color and the variation track color.

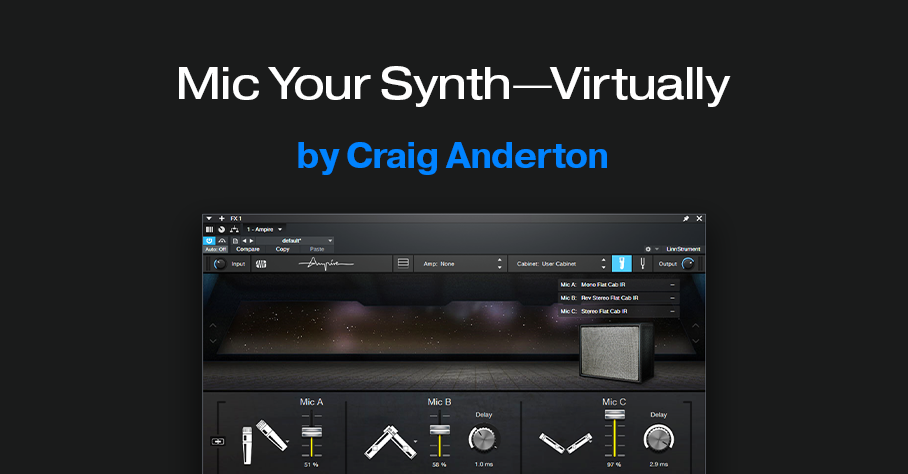

Mic Your Synth—Virtually

You can mic piano, drums, guitar, voice, and other acoustic sources…but you can’t mic a synth, unless you put it through a PA or guitar amp, and then mic that.

Or can you?

Studio One’s Ampire has mic modeling for its various cabs. However, a cab has its own frequency response, which doesn’t sound at all like miking an instrument—it sounds like miking a guitar amp. Sometimes, you want that sound with instruments other than guitar or bass. But usually, you don’t.

Ampire also has a User Cab for loading your own cab impulse responses. So, you could load a room’s impulse response instead, and set up Ampire’s mics. However, then you’re not miking an instrument, you’re miking the instrument in a room. What if you just want the sound of a miked instrument?

Here’s the solution, and I think you’ll be as surprised as I was after pulling up an FX bus fader with the sound of the virtual mics. Check out the audio example: the first half is a plain Mai Tai sound, the second half has the virtual miking. There are no effects, only Ampire’s mics. Of course, this is only one of many possibilities.

How It Works

The trick is to bypass the amp, and load a flat-response impulse into Ampire’s User Cab. Then, the audio doesn’t go through an amp or cab sound before hitting the mics. Simple, right?

The downloadable zip file (link at end) includes three flat-response IRs, each of which has its uses:

- Stereo (dual mono)

- Stereo with reversed left channel phase

- Stereo with reversed right channel phase
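To show why a flat-response impulse works, here is a small Python sketch. It is not how the downloadable files were made. It assumes that "reversed phase" means inverted polarity, borrows a 2,048-sample length and a 44.1 kHz sample rate as placeholders, and uses made-up function names. Convolving audio with a single full-scale sample returns the audio unchanged (or flipped, if the sample is negative), so nothing colors the sound before it reaches the mics.

```python
import struct
import wave

IR_LENGTH = 2048     # assumption: any short length should behave the same
SAMPLE_RATE = 44100  # assumption: the post doesn't state a sample rate


def flat_ir(length=IR_LENGTH, polarity=1.0):
    """One full-scale sample followed by silence; polarity=-1.0 inverts it."""
    return [polarity] + [0.0] * (length - 1)


def convolve(signal, ir):
    """Direct convolution, which is what an IR loader does in principle."""
    out = [0.0] * (len(signal) + len(ir) - 1)
    for i, s in enumerate(signal):
        for j, h in enumerate(ir):
            out[i + j] += s * h
    return out


def write_stereo_wav(path, left, right, fs=SAMPLE_RATE):
    """Save two equal-length channels as a 16-bit stereo WAV file."""
    frames = b"".join(
        struct.pack("<hh", round(lt * 32767), round(rt * 32767))
        for lt, rt in zip(left, right)
    )
    with wave.open(path, "wb") as wav:
        wav.setnchannels(2)
        wav.setsampwidth(2)
        wav.setframerate(fs)
        wav.writeframes(frames)


# The three variants listed above, as (left, right) channel pairs
VARIANTS = {
    "dual_mono": (flat_ir(), flat_ir()),
    "left_reversed": (flat_ir(polarity=-1.0), flat_ir()),
    "right_reversed": (flat_ir(), flat_ir(polarity=-1.0)),
}

audio = [0.5, -0.25, 0.1]
print(convolve(audio, flat_ir(4))[:3])                 # [0.5, -0.25, 0.1]
print(convolve(audio, flat_ir(4, polarity=-1.0))[:3])  # [-0.5, 0.25, -0.1]
```

Something like `write_stereo_wav("flat_dual_mono.wav", *VARIANTS["dual_mono"])` (the file name is a placeholder) would write one of the variants to disk.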

Ampire Prep

I prefer to set up Ampire in an FX bus, to enable blending the miked sound with the direct sound. However, using the miked sound by itself is viable. See which you prefer. Fig. 1 shows the Ampire settings used for the audio example.

To create the setup:

1. Insert Ampire in an FX bus.

2. Assign a Send to the FX bus from the instrument track you want to “mic.”

3. In Ampire, choose Amp: None and Cabinet: User Cabinet.

4. Download and unzip the three impulse responses.

5. Click on the Mic Edit Controls button (the blue button in fig. 1), then click on the + sign next to Mic A. Navigate to your IR of choice, and load it. Or, simply drag the impulse into the Mic’s slot.

6. Similarly, load an IR into Mic B and Mic C. Note: There must be an IR loaded in Mic A, or no sound will pass through Ampire, even if IRs are loaded in Mic B and Mic C. I recommend loading an IR in each one.

7. Go down the fun rabbit hole of mic choices, levels, mic delays, and phase changes.

Extra Tips

- Using an IR with reversed phase for one of the mics can unbalance the stereo image. Compensate by adjusting the Panpot in the Send that goes to the FX Channel.

- In the FX Channel, spreading the stereo image with the panpot’s Binaural function is cool (pre-Studio One 6 owners can use the Binaural Pan plug-in). For the audio example, I turned up the Binaural Pan so the effect would be more dramatic if heard over laptop speakers.

- The audio example mixes the miked sound up quite a bit to get the point across, but subtle settings can add an interesting dimension to synthesized sounds.

- Preceding Ampire with an Analog Delay set for a very short delay (a few milliseconds) and no feedback can enhance the effect further.

- This isn’t just about synths—try this technique with other non-acoustic sounds, like analog drums.

Reminder!

If you bought a previous version of The Huge Book of Studio One Tips and Tricks, you can now download the free version 1.4 update (with 250 tips and 126 free presets) from the PreSonus shop.

Quick, Perfect S-Shaped Fades

First, some news: the free update to version 1.4 of The Huge Book of Studio One Tips and Tricks is finally available in the PreSonus Shop. If you purchased a previous version from PreSonus, simply download the eBook again, using the same credentials—you’ll automatically receive the latest version. Now for the tip…

What’s Cool About S-Shaped Fades, Anyway?

An S-shaped fade starts by fading slowly, accelerates to halfway through the fade, then slows down to do the final part of the fade. This provides a natural, smooth kind of fade that I prefer for most song fadeouts. Also, video tracks commonly use S-shaped fades. So, an S-shaped fade for the audio can match an S-shaped fade applied to the video.

Before version 5.5, the Project Page didn’t have automation or a gain envelope, so I wrote a tip on how to incorporate an S-shaped fade in a Song’s master level automation. Once the song had the fade, you could update the Mastering file to include the fade. But now that the Project Page can do automation and gain envelopes, you can apply that tip directly to Events in the Project Page as well as in the Song page.

However, compared to the technique this post covers, the previous method has one advantage and one disadvantage. The advantage is that it’s easy to customize the S-fade shape. The disadvantage is that it’s more time-consuming to get a perfect S-shape. So, here’s how to add a perfect S-shaped fade quickly, using the Paint tool and its Parabola option:

1. Add a Gain Envelope (right-click on an Event and check Gain Envelope). You can also apply this kind of fade to master level automation.

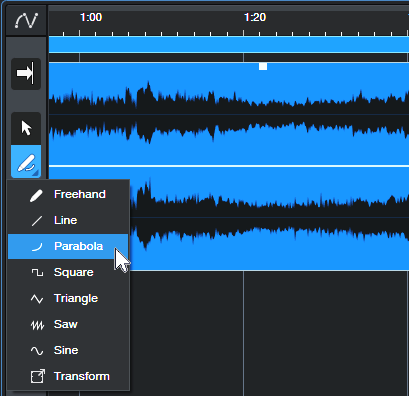

2. Choose the Parabola shape for the Paint tool (fig. 1).

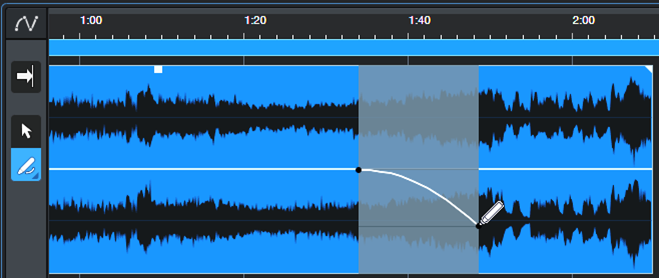

3. Click where you want the fade to begin. Drag right, and then down to where the fade’s midpoint should be (fig. 2).

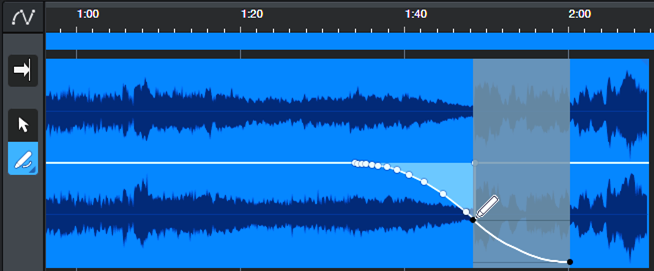

4. Finally, click where you want the fade to end. Drag left, and then up to the fade’s midpoint (fig. 3).

And now, you have your perfect S-shaped fadeout. Delete any nodes (if present) to the right of where the fade ends—and your work is done.
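For a feel of the shape the two parabola strokes produce, here is a rough Python sketch of an S-shaped fade built from two mirrored parabolas that meet at the midpoint. This is an assumed mathematical model, not Studio One's actual curve: it treats gain as a linear 1.0-to-0.0 value, whereas a gain envelope may work on a dB scale, and the function names are made up.

```python
def s_fade_gain(position):
    """Gain at a position from 0.0 (fade start) to 1.0 (fade end)."""
    if not 0.0 <= position <= 1.0:
        raise ValueError("position must be between 0.0 and 1.0")
    if position < 0.5:
        # First stroke: starts flat, then falls faster toward the midpoint
        return 1.0 - 2.0 * position ** 2
    # Second stroke: mirrors the first, flattening out toward silence
    return 2.0 * (1.0 - position) ** 2


def apply_fade(samples):
    """Scale a list of samples by the S-curve across its whole length."""
    last = max(len(samples) - 1, 1)
    return [s * s_fade_gain(i / last) for i, s in enumerate(samples)]


print(apply_fade([1.0] * 5))  # [1.0, 0.875, 0.5, 0.125, 0.0]
```

The slow start, fast middle, and slow finish show up in the numbers: the gain drops by only 0.125 in the first quarter, by 0.75 across the middle half, and by 0.125 again in the last quarter.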

Acoustic Guitar Simulator

Last week’s guitar-oriented tip seemed to go over well, so I figured y’all might like a follow-up. This takes advantage of Version 6’s Track Presets and the dynamic EQ aspect of the Pro EQ3.

Let’s Manage Expectations…

First, it won’t make your electric guitar sound like a vintage Martin D-28—sorry. The goal is to use an electric guitar to give the same kind of vibe an acoustic guitar would provide in a mix. Or, if you gig with Studio One, you would no longer need to bring an acoustic guitar just for one or two songs. Leave it at home—this does what you need.

Second, the settings are rather critical. In general, single-coil pickups (in the middle position) give the best results. The downloadable Track Preset was designed for a Telecaster. For other guitars, pickups, different input levels, playing styles, and anything with humbuckers, you’ll likely need to edit the Pro EQ3 settings.

Here’s an audio example of the acoustic guitar simulator preset in action. You’ll hear the sound being processed by the acoustic guitar simulator, and then the original guitar sound for reference.

How It Works

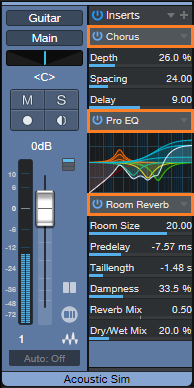

Aside from creating a more acoustic guitar-like EQ curve, the dynamic EQ provides two important tone-shaping functions (fig. 1).

The HF band increases brightness as you play harder, which acoustic guitars do naturally. The LF band simulates how playing harder “excites” an acoustic guitar’s body, which produces more level at the frequency where the body resonates. I chose 155 Hz, but that’s not a given. It just sounded right to me.

Fig. 2 shows the complete Track Preset, which adds a couple of fairly subtle effects (Chorus and Reverb). This enhances the “acoustic-guitar-within-an-acoustic-space” feel.

Finally, note that this sound can layer well with a physical acoustic guitar. The combined sound often has more animation than if you just added another track of the same acoustic guitar.

Click the link below to download the Acoustic Guitar Simulator.trackpreset!

Higher-Def Amp Sim Sounds

My sonic holy grail is “clean distortion,” which is why I like 3- or 4-band multiband presets. Splitting the audio into bands decreases the potential for nasty intermodulation distortion. This tip’s technique implements a simpler, 2-band option. Yet it increases definition considerably, and reduces the “wooly” or “splattering” quality that most amp sims have. Of course, this works with Ampire, but you’ll find it benefits other amp sims too. The audio example speaks for itself.

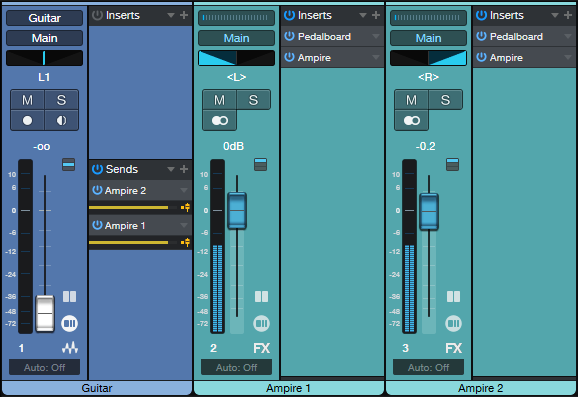

The setup uses two amp sims, with a Pedalboard inserted in front of each one. Each Pedalboard has only one effect—the High Density pack’s Blue EQ graphic equalizer. (You can use the stock graphic equalizer instead, but the results aren’t as good as using the Blue EQ.) Fig. 1 implements this technique with a Splitter and FX Chain in Studio One Pro, while fig. 2 shows the track layout for Studio One Artist.

What makes this technique so effective is that the EQs send alternate bands into the two amps (fig. 3). Usually, the interference among all the frequencies feeding an amp sim creates non-harmonic intermodulation distortion. Leaving gaps in the frequencies sent to each amp reduces their interference with each other. Mixing the two amp outputs together restores the full frequency response.

Because there’s less overall signal going into the amps, the Mixtool in fig. 1 provides a +6 dB boost to compensate. In fig. 2, setting the Send controls to +6 dB provides the desired boost.
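If you're wondering what +6 dB means in terms of raw signal level, the standard decibel formula for amplitude is gain = 10^(dB/20). Here is a tiny Python sketch (the function names are made up for illustration):

```python
import math


def db_to_gain(db):
    """Convert a level change in dB to a linear amplitude multiplier."""
    return 10 ** (db / 20)


def gain_to_db(gain):
    """Convert a linear amplitude multiplier to dB."""
    return 20 * math.log10(gain)


print(round(db_to_gain(6.0), 3))   # 1.995
print(round(gain_to_db(2.0), 2))   # 6.02
```

So a +6 dB boost very nearly doubles the signal's amplitude.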

Another cool feature is panning the two amps left and right. With the Splitter in fig. 1, simply use Channel split instead of the Normal split. In fig. 2, note that the two FX Channels are panned hard left and right. This gives a cool stereo image that a single amp can’t deliver.

But, hearing is believing—and I think you’ll be blown away by the audio example. The first part uses this technique with the default VC30 amp sound and the 2 x 12 VC 30 cab. The second part uses identical amp and cab settings, but without the Graphic EQ preceding the amp. Note that it sounds a lot dirtier. The third part uses this tip’s technique, but with the two amps spread in stereo. The sonic benefits are even more apparent when actually playing live, or in context with a mix, but this example should get the point across.

Yes, it really is that easy to transform your amp sims into higher-def versions. Have fun!

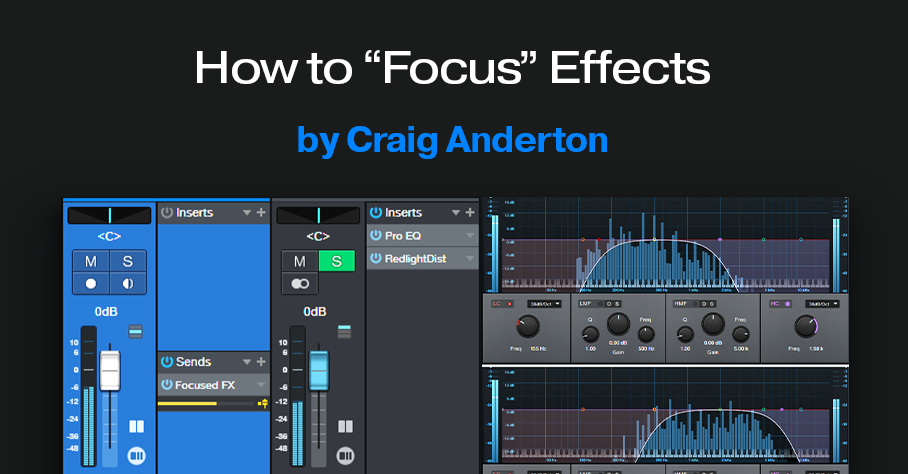

How to “Focus” Effects

There’s nothing new about using an FX Channel to add an effect in parallel to a main track. But we can make effects even more effective by “tuning” them, to provide more focus.

This process works by inserting a Pro EQ3 before an FX Channel effect or effects (fig. 1). Then, use the EQ’s Low Cut and High Cut filters to tune a specific frequency range that feeds the effect. For example, I’ve mentioned restricting high and low frequencies prior to feeding amp sims, but we can use this focusing technique with any processor.

There are several reasons for placing the Pro EQ3 before the effect. With saturation effects, this reduces the possibility of intermodulation distortion. With other effects, reducing the level of unneeded frequencies opens up more headroom in the effect itself. Finally, with effects that respond to dynamics (autofilter, compressor, etc.), you won’t have frequencies you don’t want pushing the frequencies you do want over the processor’s threshold.
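The focusing idea can be sketched in a few lines of Python. This is only an illustration under assumptions: simple one-pole filters stand in for the Pro EQ3's Low Cut and High Cut (they have much gentler slopes), the 300 Hz and 3 kHz cutoffs are arbitrary, and all names are made up. It measures how much of a low, mid, and high test tone would reach the effect.

```python
import math

FS = 44100  # sample rate (an assumption for this sketch)


def one_pole_lowpass(samples, cutoff_hz):
    """Gentle (6 dB/octave) low-pass filter."""
    coeff = 1.0 - math.exp(-2.0 * math.pi * cutoff_hz / FS)
    y = 0.0
    out = []
    for x in samples:
        y += coeff * (x - y)
        out.append(y)
    return out


def one_pole_highpass(samples, cutoff_hz):
    """High-pass made by subtracting the low-passed signal from the input."""
    low = one_pole_lowpass(samples, cutoff_hz)
    return [x - lo for x, lo in zip(samples, low)]


def focus(samples, low_cut_hz, high_cut_hz):
    """Low Cut followed by High Cut, placed ahead of the effect."""
    return one_pole_lowpass(one_pole_highpass(samples, low_cut_hz), high_cut_hz)


def tone(freq, seconds=0.5):
    return [math.sin(2 * math.pi * freq * n / FS) for n in range(int(FS * seconds))]


def rms(samples):
    return math.sqrt(sum(s * s for s in samples) / len(samples))


# Hypothetical midrange focus: 300 Hz low cut, 3 kHz high cut
levels = {freq: rms(focus(tone(freq), 300.0, 3000.0)) for freq in (50, 1000, 10000)}
for freq, level in levels.items():
    print(f"{freq:>5} Hz reaches the effect at RMS {level:.3f}")
```

The 1 kHz tone passes nearly intact, while the 50 Hz and 10 kHz tones arrive much quieter, so they use less of the effect's headroom and are less likely to trip a dynamics-based processor.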

Here are some specific examples to help get your creative juices flowing.

Distortion or Saturation with Drums

The audio example plays four measures of drums going into the RedlightDist, with no focus. The next four measures focus on the high frequencies. This gives an aggressive “snap” to the snare. The next four measures focus on the low frequencies, to push the kick forward.

Fig. 2 shows the tunings for the high- and low-frequency focus.

Reverb with Guitar

The audio example plays four measures of midrange-frequency focus feeding reverb, then four measures using a high-frequency focus. Focusing is helpful with longer reverb times, because there are fewer frequencies to interfere with the main sound.

Fig. 3 shows the tunings for the midrange- and high-frequency focus filters.

Delay with Synth Solo

For our last example, the first five measures are synth with no focus. The next five measures focus on the lower frequencies. The difference is subtle, but it “tucks away” the delay behind the solo line. The final five measures focus on the high frequencies, for a more distant echo vibe.

Fig. 4 shows the tunings for the low- and high-frequency focus filters.

These are just a few possibilities—another favorite of mine is sending focused frequencies to a chorus, so that the chorus effect doesn’t overwhelm an instrument. Expanders also lend themselves to this approach, as does saturation with bass and electric pianos.

Perhaps most importantly, focusing the effects can give a less cluttered mix. Even tracks with heavy processing can stand out, and sound well-defined.

Better Autofilter Control

The March 2020 blog post, Taming the Wild Autofilter, never appeared in any of The Huge Book of Studio One Tips and Tricks eBook updates. This is because the tip worked in Studio One 4, but not in Studio One 5. However, Studio One 6 has brought the Autofilter back to its former glory (and then some). Even better, we can now take advantage of FX Bus sends and dynamic EQ. So, this tip is a complete redo of the original blog post. (Compared to a similar tip in eBook version 1.4, this version replaces the Channel Strip with the Pro EQ3 for additional flexibility.)

The reason for coming up with this technique was that although I’d used the Autofilter for various applications, I couldn’t get it to work quite right for its intended application with guitar or bass. Covering the right filter cutoff range was a problem—for example, it wouldn’t go high enough if I hit the strings hard, but if I compensated for that by turning up the filter cutoff, then the cutoff wouldn’t go low enough with softer picking. Furthermore, the responsiveness varied dramatically, depending on whether I was playing high on the neck, or hitting low notes on the low E and A strings. This tip solves these issues.

The guitar track’s audio uses pre-fader sends to go to two FX Buses (fig. 1). The Autofilter Out FX Bus produces the audio output. The Autofilter Trig FX bus processes the audio going to the Autofilter’s sidechain. By processing the Guitar track’s send to the sidechain, we can make the Autofilter respond however we want. Furthermore, if needed, you can feed a low-level signal from the Guitar track’s pre-fader send into the Autofilter, to avoid distortion with high-resonance settings. This is possible because the Autofilter Trig bus—which you don’t need to hear, and can be any level you want—controls the Autofilter’s action.

Perhaps best of all, this also means the Autofilter no longer depends on having an input signal with varying dynamics. You can insert an amp sim, overdrive, compressor, or anything else that restricts dynamic range in front of the Autofilter. The Autofilter will still respond to the original Guitar track’s dynamics, as processed by the dynamic EQ.

The Pro EQ3 (fig. 2) conditions the send to make the Autofilter happy. The dynamic EQ attenuates lower frequencies that exceed the Threshold, but amplifies higher frequencies that exceed the Threshold. So, the Autofilter’s response to the higher-output, lower strings can be consistent with the upper strings.

The Autofilter (fig. 3) sets the LFO Cutoff Modulation to 0, because I wanted only the envelope to affect the filter. The settings for the Autofilter and Pro EQ3 interact with each other, as well as with the guitar and pickups. In this case, I used a Telecaster with a single-coil treble pickup. For humbucking pickups, you may need to attenuate the low frequencies more.

Like Autofilters in general, it takes some experimenting to dial in the ideal settings for your playing style, strings, pickups, musical genre, and so on. However, the big advantage of this approach is that once you find the ideal settings, the response will be less critical, more consistent, and more forgiving of big dynamic changes in your playing.

And here’s a final tip: Processing the signal going to the Autofilter’s sidechain has much potential. Try using Analog Delay, X-Trem, and other effects. Also, although the original Guitar track and Autofilter Trig faders are shown at 0, no law says they have to be. Feel free to mix in some of the original guitar sound, and/or the equalized Autofilter Trig bus audio.

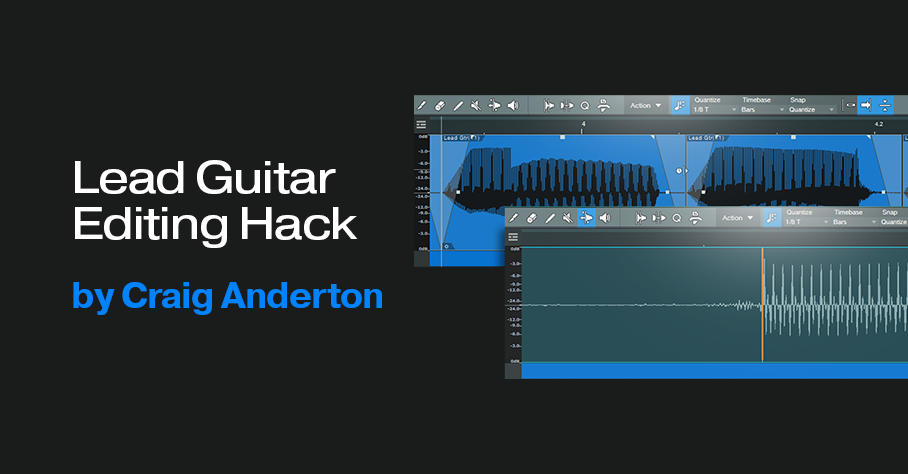

Lead Guitar Editing Hack

High-gain distortion is great for lead guitar sustain and tone, but it also brings up that “splat” of pick noise at the note’s beginning. Sometimes, you want the gritty, dirty feel it adds. But it can be a distraction when your goal is a gentler, more lyrical tone that still retains the sound of heavy distortion.

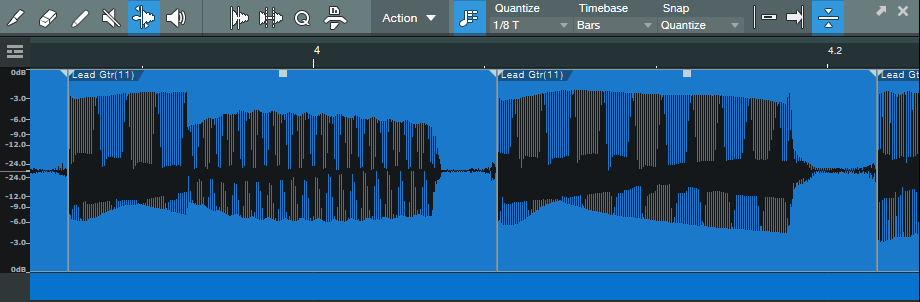

This technique gives the best of both worlds for single-note leads, and is particularly effective with full mixes where the lead guitar has a lot of echo. Normally the echo will repeat the pick noise, so reducing it reduces clutter, and gives more clarity to the mix.

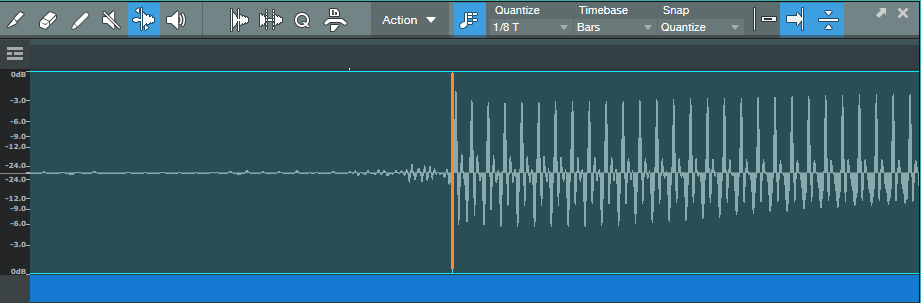

1. Open the lead part in the Edit window.

2. Choose Action, and under the Audio Bend tab, select Detect Transients.

3. Zoom in to verify there’s a Bend Marker at the beginning of each note’s first peak (fig. 1). If you need to add a Bend Marker, click at the note’s beginning using the Bend tool. To move a Bend Marker for more precise placement, hold Alt/Opt while clicking on the marker with the Bend tool, and drag.

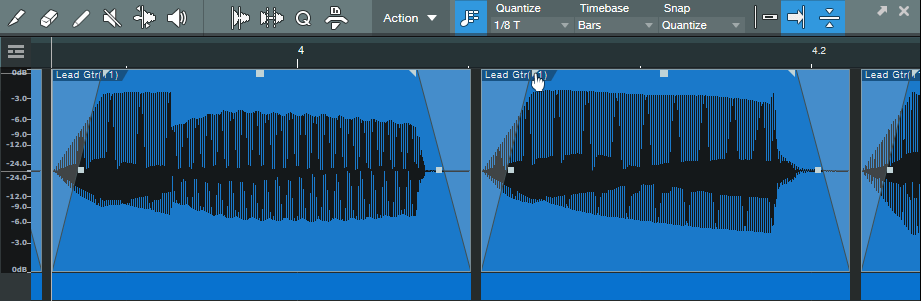

4. Choose Action, and under the Audio Bend tab, select Split at Bend Markers. Now, each note is its own Event (fig. 2).

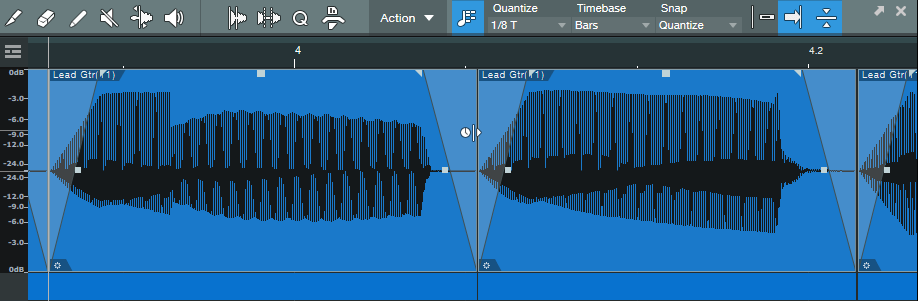

5. Make sure all the notes are selected (fig. 3). The next steps show any needed edits being made to one Event. However, because all the notes are selected, any edit affects all notes equally. To show the edits in more detail, the following steps zoom in on two notes.

6. Trim the note ends to remove some of the pre-note “dirt” (fig. 4).

7. Add a fade-in and fade-out (fig. 5). This doesn’t have to be exact, because you’ll optimize the times in step 9.

8. There’s a gap between notes, so time-stretch the end of the note to cover the gap. Alt/Opt+click on the end of a note, and drag to the right until the note end is up against the beginning of the next note (fig. 6).

9. Start playback with all the notes still selected, listen, and vary the fade times. Also experiment with the curve shape. A concave curve can work well with attacks. I often try for the minimum amount of attack and decay that gives the desired result, but not always. When taken to extremes, being able to shape notes enables options that sound almost like a synthesizer.

That may seem like a lot of work, but once you’ve defined the bend markers, having to edit only one note to edit all the notes speeds the process.

The audio example shows how this tweak affects a single-note lead. The first part is as recorded, the second part uses this tip.